How to Ensure Quality Control in Large-Scale Video Annotation Projects

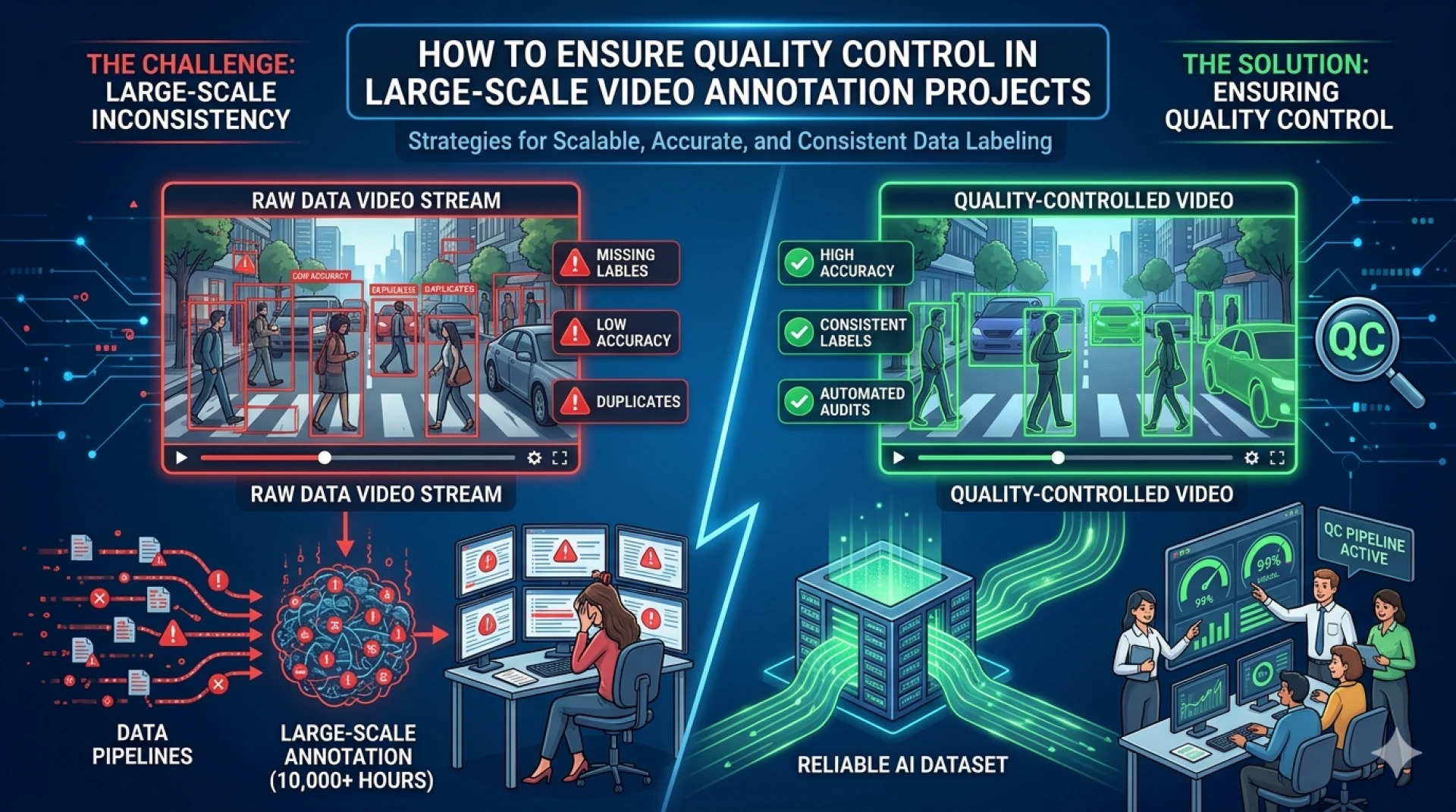

In the era of artificial intelligence and machine learning, high-quality annotated video data has become the backbone of intelligent systems. From autonomous vehicles and surveillance systems to healthcare monitoring and retail analytics, video datasets fuel the performance of advanced AI models. However, as projects scale in size and complexity, maintaining consistent quality across thousands or even millions of frames becomes a significant challenge. For businesses relying on precise training data, robust quality control is not optional—it is essential.

At Annotera, we understand that large-scale video annotation projects demand a strategic blend of expertise, process discipline, and technology. As a trusted video annotation company, we help organizations achieve annotation accuracy at scale through proven quality control frameworks. This article explores the key methods and best practices to ensure quality control in large-scale video annotation projects.

Why Quality Control Matters in Video Annotation

Video annotation involves labeling moving objects, actions, events, and temporal sequences across multiple frames. Unlike image annotation, video data introduces an additional layer of complexity because objects must be tracked consistently over time.

A minor labeling inconsistency in one frame can create cascading errors across an entire video sequence, negatively affecting model performance. Poor annotation quality often leads to:

- Reduced model accuracy

- Higher false positives and false negatives

- Increased retraining costs

- Project delays

- Poor real-world AI outcomes

This is why partnering with a reliable data annotation company is critical for enterprises running large-scale AI initiatives.

Establish Clear Annotation Guidelines

The foundation of quality control starts with detailed annotation guidelines. Every annotator involved in the project must follow a standardized set of instructions to ensure uniformity.

These guidelines should include:

- Object definitions and class taxonomy

- Boundary drawing rules

- Occlusion handling instructions

- Frame transition protocols

- Tracking ID assignment methods

- Event labeling definitions

- Edge-case examples

For example, in a traffic surveillance project, the guidelines should clearly specify how to label partially visible vehicles, pedestrians behind obstacles, or fast-moving motorcycles across frames.

A professional video annotation outsourcing partner like Annotera develops project-specific documentation to reduce ambiguity and ensure every annotator interprets labels consistently.

Use Multi-Level Quality Review Processes

One of the most effective ways to maintain annotation quality at scale is to implement a multi-tier review workflow.

A typical quality control hierarchy includes:

Level 1: Primary Annotation

Initial annotation is performed by trained specialists.

Level 2: Peer Review

A second annotator reviews the labels for consistency, tracking continuity, and classification accuracy.

Level 3: Quality Audit Team

A dedicated quality assurance team performs random and rule-based audits on annotated batches.

This layered review process helps identify both human errors and systematic inconsistencies early in the workflow.
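The three-level hierarchy can be sketched as a small state machine. This is a minimal illustration under assumed rules, not Annotera's actual workflow: the stage names and the `advance` function are hypothetical, and the sketch assumes that a failed review at level 2 or level 3 sends the task back to primary annotation for correction.

```python
from enum import Enum, auto


class Stage(Enum):
    ANNOTATION = auto()   # Level 1: primary annotation
    PEER_REVIEW = auto()  # Level 2: a second annotator checks the labels
    QA_AUDIT = auto()     # Level 3: dedicated quality audit team
    ACCEPTED = auto()     # task has passed every review level


# Forward path through the three review levels.
NEXT_STAGE = {
    Stage.ANNOTATION: Stage.PEER_REVIEW,
    Stage.PEER_REVIEW: Stage.QA_AUDIT,
    Stage.QA_AUDIT: Stage.ACCEPTED,
}


def advance(stage: Stage, passed: bool) -> Stage:
    """Return the next stage for a task (hypothetical workflow rules).

    Submitting from ANNOTATION always moves the task to peer review.
    A failed check at PEER_REVIEW or QA_AUDIT sends it back to ANNOTATION.
    """
    if stage is Stage.ACCEPTED:
        raise ValueError("task is already accepted")
    if stage is Stage.ANNOTATION or passed:
        return NEXT_STAGE[stage]
    return Stage.ANNOTATION


if __name__ == "__main__":
    stage = Stage.ANNOTATION
    # Submit, peer review fails, resubmit, peer review passes, audit passes.
    for passed in [True, False, True, True, True]:
        stage = advance(stage, passed)
    print(stage)  # Stage.ACCEPTED
```

Routing every failure back to level 1 keeps corrections with the original annotator; a real project might instead let the QA team fix minor issues in place.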

For large-scale projects, a leading video annotation company often integrates automated validation checks with manual review to improve efficiency.

Leverage Automated Quality Checks

Automation plays a crucial role in quality control, especially in high-volume projects. While human expertise remains indispensable, automated systems help detect repetitive errors faster.

Common automated quality checks include:

- Missing frame annotations

- Broken object tracking sequences

- Duplicate labels

- Incorrect class IDs

- Bounding box overlap errors

- Temporal continuity mismatches

AI-assisted validation tools can flag anomalies such as an object that disappears without justification or a tracking ID that changes unexpectedly.
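As a rough illustration of how several of these checks might be coded, here is a minimal Python sketch. It assumes a hypothetical flat label format (`Label` with frame index, track ID, class ID and an `(x1, y1, x2, y2)` box); the 0.9 IoU cut-off for flagging near-duplicate boxes is an arbitrary example value. Because occlusion guidelines may legitimately allow gaps in a track, the output is a list of flags for human review rather than automatic rejections.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Label:
    """One box on one frame (hypothetical export format)."""
    frame: int
    track_id: int
    class_id: int
    box: tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def validate(labels, num_frames, valid_classes, overlap_threshold=0.9):
    """Return a list of (check_name, detail) flags for one video."""
    issues = []
    by_frame = defaultdict(list)
    by_track = defaultdict(list)
    for lab in labels:
        by_frame[lab.frame].append(lab)
        by_track[lab.track_id].append(lab)

    # Missing frame annotations: frames with no labels at all.
    for f in range(num_frames):
        if f not in by_frame:
            issues.append(("missing_frame", f))

    for f, labs in sorted(by_frame.items()):
        ids = [lab.track_id for lab in labs]
        # Duplicate labels: the same track ID used twice in one frame.
        for tid in sorted({t for t in ids if ids.count(t) > 1}):
            issues.append(("duplicate_track_id", (f, tid)))
        # Overlap errors: two tracks with near-identical boxes in one frame.
        for i in range(len(labs)):
            for j in range(i + 1, len(labs)):
                if iou(labs[i].box, labs[j].box) >= overlap_threshold:
                    issues.append(("box_overlap", (f, labs[i].track_id, labs[j].track_id)))

    # Incorrect class IDs: values outside the project taxonomy.
    for lab in labels:
        if lab.class_id not in valid_classes:
            issues.append(("invalid_class", (lab.frame, lab.track_id, lab.class_id)))

    for tid, labs in sorted(by_track.items()):
        frames = sorted({lab.frame for lab in labs})
        # Broken tracking sequence: the track disappears, then reappears.
        for prev, cur in zip(frames, frames[1:]):
            if cur - prev > 1:
                issues.append(("track_gap", (tid, prev, cur)))
        # Temporal continuity: one track ID should keep a single class.
        if len({lab.class_id for lab in labs}) > 1:
            issues.append(("class_switch", tid))
    return issues
```

For example, a track labelled on frames 0, 1 and 3 but not 2 yields both a `missing_frame` flag for frame 2 (if nothing else is labelled there) and a `track_gap` flag, which a reviewer can then confirm or dismiss as a documented occlusion.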

At Annotera, we combine intelligent validation tools with human oversight to maintain high precision across enterprise-scale datasets.

Train and Continuously Upskill Annotators

Annotation quality is directly linked to annotator expertise. Even the best workflow can fail without a skilled workforce.

A reliable data annotation outsourcing provider invests heavily in annotator training programs that include:

- Domain-specific onboarding

- Tool proficiency training

- Annotation simulations

- Edge-case handling workshops

- Continuous feedback loops

For instance, annotating medical videos requires a very different skill set from annotating retail surveillance footage or sports analytics videos.

Continuous learning ensures that teams stay aligned with evolving project requirements and industry standards.

Use Gold Standard Datasets for Benchmarking

Gold standard datasets are pre-annotated sample videos reviewed and approved by domain experts. These datasets serve as a benchmark to measure annotator performance.

Before assigning large production batches, annotators should work on gold standard samples. Their output can then be compared against the benchmark to assess:

- Accuracy rate

- Consistency score

- Tracking precision

- Error frequency

This process helps identify skill gaps early and improves overall quality.
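One way to turn a gold-standard comparison into numbers is to match each annotator box to a gold box of the same class when their intersection over union (IoU) reaches a threshold, then report precision and recall. The sketch below assumes a hypothetical per-frame dictionary format and simple greedy matching; the 0.5 IoU threshold mirrors a common object-detection convention (as in PASCAL VOC) but should be tuned per project.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def score_against_gold(annotator, gold, iou_threshold=0.5):
    """Compare an annotator's output with a gold-standard sample.

    Both inputs map frame index -> list of (class_id, (x1, y1, x2, y2)).
    An annotator box is a true positive when it matches a still-unmatched
    gold box of the same class with IoU >= iou_threshold (greedy matching).
    """
    tp = fp = fn = 0
    for frame in set(annotator) | set(gold):
        truths = gold.get(frame, [])
        unmatched = list(range(len(truths)))
        for cls, box in annotator.get(frame, []):
            best, best_iou = None, iou_threshold
            for k in unmatched:
                g_cls, g_box = truths[k]
                overlap = iou(box, g_box)
                if g_cls == cls and overlap >= best_iou:
                    best, best_iou = k, overlap
            if best is None:
                fp += 1  # extra or misclassified box
            else:
                unmatched.remove(best)
                tp += 1
        fn += len(unmatched)  # gold objects the annotator missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "errors": fp + fn}
```

Precision penalises extra or wrongly classified boxes, while recall penalises missed objects, so the two together give a finer view of an annotator's weak spots than a single accuracy rate.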

As an experienced video annotation company, Annotera uses benchmark datasets extensively to calibrate teams before project deployment.

Implement Sampling-Based Audits

In large-scale annotation projects, reviewing every single frame manually may not always be practical. Sampling-based audits help maintain efficiency without compromising quality.

Quality teams can review:

- Random frame samples

- High-complexity video segments

- Edge-case heavy sequences

- Low-confidence outputs

For example, auditing 10–15% of the total dataset at defined intervals can reveal recurring issues and process bottlenecks.

If the error rate in the sample exceeds a predefined threshold, the entire batch can be sent back for correction.
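A sampling audit with a rejection threshold could look like the following sketch. The `review_frame` callback stands in for a human reviewer's verdict and is hypothetical, as are the default 10% sample rate and 2% maximum error rate; both values should come from the project's own quality agreement. A fixed random seed keeps the sample reproducible, so an auditor can re-check exactly the same frames.

```python
import math
import random
from typing import Callable, Sequence


def audit_batch(frame_ids: Sequence[int],
                review_frame: Callable[[int], bool],
                sample_rate: float = 0.10,
                max_error_rate: float = 0.02,
                seed: int = 0):
    """Audit a random sample of frames and decide whether the batch passes.

    review_frame(frame_id) returns True when the reviewer finds an error
    in that frame. Returns (verdict, error_rate, sampled_frame_ids).
    """
    if not frame_ids:
        raise ValueError("empty batch")
    k = max(1, math.ceil(len(frame_ids) * sample_rate))
    rng = random.Random(seed)  # fixed seed makes the audit reproducible
    sample = rng.sample(list(frame_ids), k)
    errors = sum(1 for f in sample if review_frame(f))
    error_rate = errors / k
    verdict = "accept" if error_rate <= max_error_rate else "return_for_rework"
    return verdict, error_rate, sorted(sample)
```

In practice, the article's suggestion of weighting audits toward high-complexity or low-confidence segments could be added by sampling those frames at a higher rate than the rest of the batch.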

This approach is commonly adopted in professional video annotation outsourcing workflows to balance speed and precision.

Maintain Consistency Across Large Teams

One of the biggest challenges in large-scale projects is maintaining consistency when multiple teams work simultaneously.

To solve this, quality managers should establish:

- Centralized guideline repositories

- Version-controlled annotation manuals

- Regular calibration sessions

- Weekly feedback reviews

- Inter-annotator agreement checks

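Inter-annotator agreement (IAA) is particularly important. It measures how consistently different annotators label the same video sequence, typically with chance-corrected statistics for class labels and overlap measures such as IoU for boxes and tracks.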

High IAA scores indicate strong process consistency, which directly contributes to reliable AI model training.
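For categorical labels (for example, the class assigned to each object), a widely used IAA statistic for two annotators is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a plain-Python implementation of the standard formula; for more than two annotators, statistics such as Fleiss' kappa or Krippendorff's alpha are typically used instead.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement
    and p_e is the agreement expected by chance from each annotator's
    label frequencies.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_e == 1.0:
        # Both annotators used one identical class everywhere; kappa is
        # undefined here, so by convention treat it as perfect agreement.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


a = ["car", "car", "ped", "ped", "car", "bike"]
b = ["car", "ped", "ped", "ped", "car", "bike"]
print(round(cohens_kappa(a, b), 3))
```

Here the annotators agree on 5 of 6 objects (p_o ≈ 0.83), but chance agreement is 13/36, so kappa is 17/23, about 0.74, noticeably lower than the raw agreement rate.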

Monitor Performance Through KPIs

Quality control becomes more effective when it is driven by measurable performance indicators.

Key quality KPIs include:

- Annotation accuracy percentage

- Frame-level error rate

- Rework percentage

- Tracking consistency score

- Turnaround time

- Reviewer rejection rate

By monitoring these metrics regularly, project managers can identify trends, optimize workflows, and improve team performance.
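Several of these KPIs can be computed directly from per-task review logs. The sketch assumes a hypothetical `TaskRecord` schema; the field names and the definitions used here (for example, accuracy as the share of frames with no reviewer-found error) are illustrative choices, and a real project would fix its own definitions up front. Tracking consistency is omitted because it needs track-level data rather than task summaries.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRecord:
    """One annotation task as logged by a (hypothetical) QA system."""
    frames: int              # frames annotated in the task
    error_frames: int        # frames where review found at least one error
    rework_frames: int       # frames that had to be re-annotated
    rejected: bool           # whether any reviewer rejected the task
    turnaround_hours: float  # time from assignment to final acceptance


def quality_kpis(tasks):
    """Aggregate task records into project-level quality KPIs."""
    total_frames = sum(t.frames for t in tasks)
    if total_frames == 0:
        raise ValueError("no annotated frames")
    frame_error_rate = sum(t.error_frames for t in tasks) / total_frames
    return {
        "frame_error_rate": frame_error_rate,
        "annotation_accuracy_pct": 100 * (1 - frame_error_rate),
        "rework_pct": 100 * sum(t.rework_frames for t in tasks) / total_frames,
        "reviewer_rejection_rate": sum(t.rejected for t in tasks) / len(tasks),
        "mean_turnaround_hours": mean(t.turnaround_hours for t in tasks),
    }
```

Weighting by frames rather than averaging per-task rates keeps a few short tasks from skewing the project-level figures; tracking these values per batch or per week is what makes trends visible on a dashboard.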

At Annotera, we provide clients with transparent quality dashboards to ensure complete visibility into project progress and accuracy benchmarks.

Use Scalable Project Management Systems

Large-scale video annotation projects require seamless coordination across teams, reviewers, and clients.

A robust project management framework helps streamline:

- Task allocation

- Progress tracking

- Review cycles

- Quality reports

- Issue resolution

Workflow automation tools also ensure that reviewed tasks move efficiently between annotation and QA stages.

As a dependable data annotation company, Annotera leverages scalable management systems to support enterprise-grade annotation projects with fast turnaround and consistent quality.

Partner With the Right Annotation Experts

Ultimately, quality control in large-scale video annotation depends on the expertise of the service provider.

Choosing an experienced video annotation company ensures access to:

- Skilled annotation specialists

- Advanced QA workflows

- Automated validation systems

- Industry-specific expertise

- Scalable delivery models

At Annotera, our quality-first approach helps businesses confidently scale their AI initiatives through accurate, consistent, and reliable video annotation services.

Conclusion

Quality control is the cornerstone of successful large-scale video annotation projects. Without it, even the most advanced AI models can fail due to inconsistent or inaccurate training data.

From detailed guidelines and multi-level reviews to automated checks and continuous workforce training, every layer of the process contributes to better outcomes.

As a trusted partner in data annotation outsourcing and video annotation outsourcing, Annotera is committed to delivering precision-driven video annotation services tailored for enterprise AI needs.

When scale meets quality, innovation thrives—and that is exactly what Annotera helps businesses achieve.
