Ethical Challenges in LLM Training Data Collection

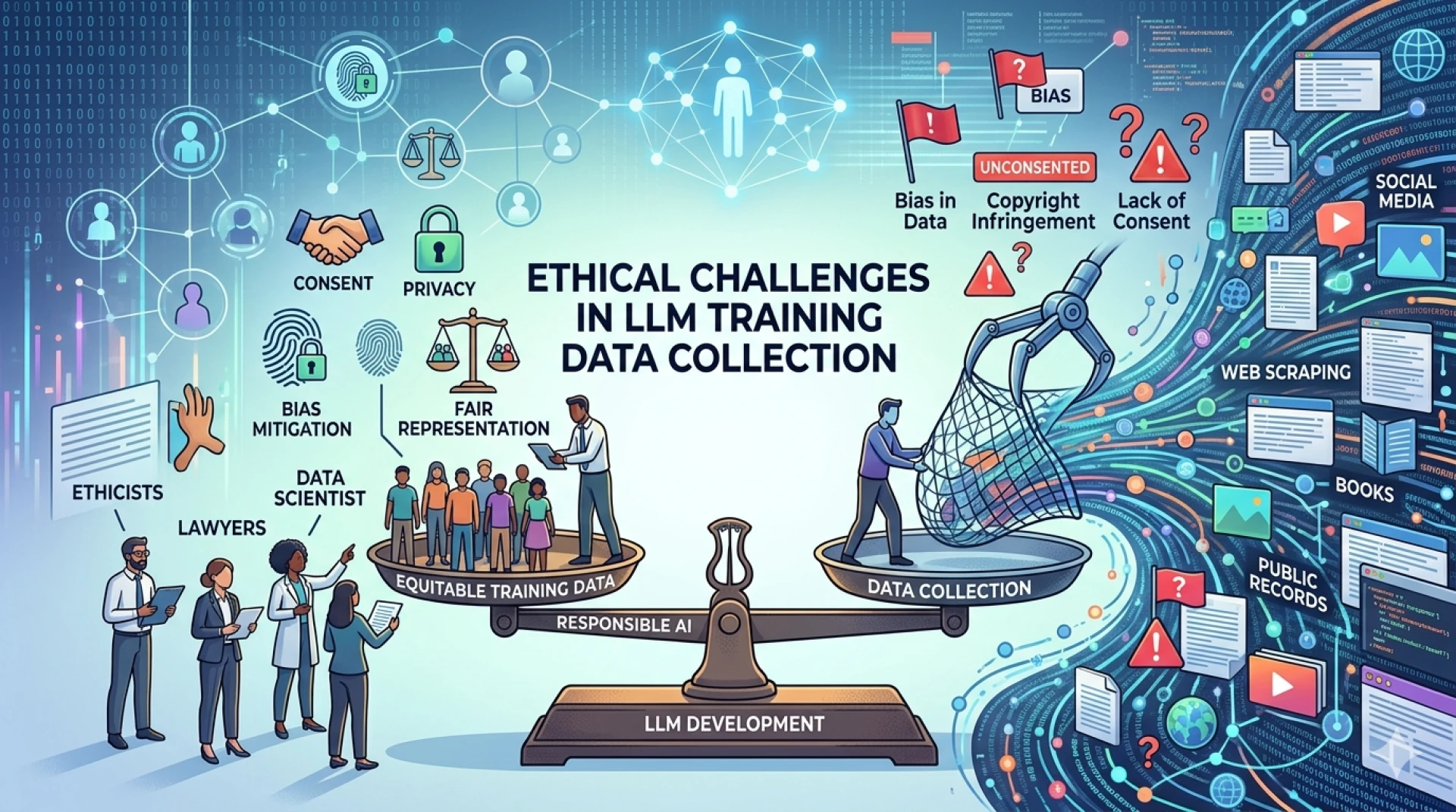

Large Language Models (LLMs) are transforming how businesses operate, enabling automation, personalization, and intelligent decision-making at scale. However, behind every high-performing model lies a vast ecosystem of training data—often sourced, curated, and annotated under complex and sometimes contentious conditions. As organizations increasingly rely on LLMs, ethical considerations in training data collection are no longer optional—they are foundational.

At Annotera, we recognize that building responsible AI systems starts with ethically sourced, high-quality data. This article explores the key ethical challenges in LLM training data collection and how organizations can address them through structured processes, responsible governance, and expert-driven annotation strategies.

The Foundation: Why Ethics in Data Collection Matters

The phrase “garbage in, garbage out” is particularly relevant in the context of LLMs. The quality, diversity, and integrity of training data directly influence model behavior. High-quality training data shapes LLM performance not just in terms of accuracy, but also in fairness, bias mitigation, and trustworthiness.

Ethical lapses in data collection can lead to:

- Biased or discriminatory outputs

- Privacy violations

- Legal and regulatory risks

- Erosion of user trust

As a leading data annotation company, Annotera emphasizes that ethical data practices are not a compliance checkbox—they are a competitive advantage.

1. Data Privacy and Consent

One of the most pressing ethical challenges is ensuring that data used for LLM training respects user privacy and consent. Much of the data used to train models is scraped from publicly available sources, but “public” does not always equate to “ethically usable.”

Key Concerns:

- Lack of explicit user consent

- Inclusion of personally identifiable information (PII)

- Misuse of sensitive data (health, financial, or personal communications)

Best Practices:

- Implement robust data filtering pipelines to remove PII

- Use consent-based datasets wherever possible

- Align with global regulations such as GDPR and CCPA

At Annotera, our data annotation outsourcing workflows include multi-layered privacy checks to ensure compliance and ethical integrity.
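To make the first best practice more concrete, here is a minimal Python sketch of a PII filtering pass. It assumes a simple rule-based approach that only catches common email formats and North American-style phone numbers; the patterns, the `[EMAIL]`/`[PHONE]` placeholders, and the `max_pii` threshold are illustrative assumptions, not a complete PII detector. A production pipeline would likely layer named-entity recognition and human review on top of rules like these.

```python
import re

# Illustrative patterns only (an assumption for this sketch): common email
# formats and North American-style phone numbers. Real PII detection needs
# far broader coverage (names, addresses, IDs, other locales).
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\(?\b\d{3}\)?[-.\s]?\d{3}[-.\s]?\d{4}\b"),
}


def redact_pii(text: str) -> tuple[str, int]:
    """Replace each matched PII span with a placeholder tag.

    Returns the redacted text and the total number of matches.
    """
    total = 0
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        total += n
    return text, total


def filter_corpus(docs: list[str], max_pii: int = 5) -> tuple[list[str], list[str]]:
    """Redact every document; set aside PII-dense ones for manual review."""
    kept, flagged = [], []
    for doc in docs:
        clean, count = redact_pii(doc)
        # Documents with many matches are held back (in redacted form) until
        # a reviewer confirms the automated redaction was sufficient.
        (flagged if count > max_pii else kept).append(clean)
    return kept, flagged


if __name__ == "__main__":
    print(redact_pii("Contact jane.doe@example.com or 555-123-4567."))
    # ('Contact [EMAIL] or [PHONE].', 2)
```

Redacting rather than deleting keeps the surrounding text usable, while documents that are dense with personal data are not left to automated redaction alone.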

2. Bias and Representation

Bias in training data is one of the most widely discussed ethical issues in AI. LLMs trained on unbalanced datasets may reinforce stereotypes or marginalize certain groups.

Types of Bias:

- Cultural bias

- Gender and racial bias

- Socioeconomic bias

Impact:

Biased models can produce harmful or misleading outputs, especially in sensitive applications like hiring, healthcare, or legal advisory.

Mitigation Strategies:

- Curate diverse and representative datasets

- Use bias detection tools during preprocessing

- Incorporate human-in-the-loop validation

Through our RLHF Annotation Services, Annotera ensures that human feedback plays a critical role in identifying and correcting biased outputs.
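As one simple example of a preprocessing check, the sketch below measures how evenly a dataset covers the groups described by a single metadata field and flags groups that fall below a minimum share. The `attribute` field (for instance a hypothetical `region` or `language` tag on each record) and the default 10% threshold are assumptions for illustration. Representation counts are only a starting point: they say nothing about biased content within well-represented groups, which is where human-in-the-loop validation comes in.

```python
from collections import Counter


def representation_report(records, attribute, min_share=0.10):
    """Share of records per group for one metadata attribute.

    Records missing the attribute are counted under "unknown". Returns the
    share per group and a sorted list of groups below `min_share`.
    """
    counts = Counter(r.get(attribute, "unknown") for r in records)
    total = sum(counts.values())
    shares = {group: n / total for group, n in counts.items()}
    underrepresented = sorted(g for g, s in shares.items() if s < min_share)
    return shares, underrepresented


if __name__ == "__main__":
    # Hypothetical records tagged with a "region" field.
    data = [{"region": "NA"}] * 6 + [{"region": "EU"}] * 3 + [{"region": "APAC"}]
    shares, low = representation_report(data, "region", min_share=0.15)
    print(shares)  # {'NA': 0.6, 'EU': 0.3, 'APAC': 0.1}
    print(low)     # ['APAC']
```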

3. Data Ownership and Intellectual Property

Another ethical gray area is the ownership of data used in LLM training. Many datasets include copyrighted materials such as books, articles, and proprietary content.

Challenges:

- Unclear licensing agreements

- Unauthorized use of copyrighted data

- Legal disputes over content ownership

Ethical Approach:

- Use licensed or open-source datasets

- Maintain clear documentation of data sources

- Implement audit trails for dataset usage

As a responsible data annotation company, Annotera prioritizes transparency in data sourcing and ensures that all datasets used in training pipelines adhere to legal and ethical standards.
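The documentation and audit-trail practices above could look something like the following sketch: each candidate document is fingerprinted with SHA-256, checked against a license allowlist, and logged whether it is accepted or rejected. The `ALLOWED_LICENSES` set (written as SPDX identifiers), the `SourceRecord` fields, and the `admit` helper are hypothetical; deciding which licenses are actually acceptable for training is a legal question this code does not answer.

```python
import hashlib
from dataclasses import asdict, dataclass

# Hypothetical allowlist of SPDX license identifiers. Which licenses are
# acceptable for training is a legal decision, not a technical one.
ALLOWED_LICENSES = {"CC0-1.0", "CC-BY-4.0", "MIT", "Apache-2.0"}


@dataclass(frozen=True)
class SourceRecord:
    source_url: str
    license_id: str
    retrieved_at: str      # ISO 8601 date of collection
    content_sha256: str    # fingerprint of the exact text that was collected


def admit(text, source_url, license_id, retrieved_at, audit_log):
    """Accept a document only if its license is allowlisted.

    Every decision, accepted or rejected, is appended to `audit_log` so the
    dataset's composition can be reconstructed later.
    """
    record = SourceRecord(
        source_url=source_url,
        license_id=license_id,
        retrieved_at=retrieved_at,
        content_sha256=hashlib.sha256(text.encode("utf-8")).hexdigest(),
    )
    accepted = license_id in ALLOWED_LICENSES
    audit_log.append({**asdict(record), "accepted": accepted})
    return accepted


if __name__ == "__main__":
    log = []
    admit("Some openly licensed text.", "https://example.org/a", "CC-BY-4.0", "2024-05-01", log)
    admit("A paywalled article.", "https://example.org/b", "proprietary", "2024-05-01", log)
    print([(entry["source_url"], entry["accepted"]) for entry in log])
    # [('https://example.org/a', True), ('https://example.org/b', False)]
```

Logging rejections as well as acceptances is a deliberate choice: an auditor can then see not only what went into the dataset, but what was considered and excluded.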

4. Transparency and Accountability

Organizations often struggle with maintaining transparency in how training data is collected, processed, and used. This lack of visibility can lead to mistrust among users and stakeholders.

Key Issues:

- Opaque data pipelines

- Lack of explainability in model decisions

- Difficulty in auditing data sources

Solutions:

- Maintain detailed data lineage records

- Provide model documentation (e.g., model cards)

- Enable third-party audits

Annotera integrates transparent workflows in its data annotation outsourcing services, allowing clients to trace every step of the data lifecycle.
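Data lineage records can start as simply as an append-only log of each processing step, with fingerprints of what the step received and what it produced. In this sketch, `LineageLog` and `fingerprint` are hypothetical names, records are assumed to be JSON-serializable dictionaries, and the consistency check verifies that each step consumed exactly what the previous step produced.

```python
import hashlib
import json


def fingerprint(records):
    """Order-sensitive SHA-256 of a list of JSON-serializable records."""
    payload = json.dumps(records, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class LineageLog:
    """Append-only log of pipeline steps and the data each one saw."""

    def __init__(self):
        self.entries = []

    def run(self, step_name, func, records):
        """Apply `func` to `records`, logging input/output fingerprints and counts."""
        # Capture the input before `func` runs, in case it mutates `records`.
        input_hash, input_count = fingerprint(records), len(records)
        output = func(records)
        self.entries.append({
            "step": step_name,
            "input_sha256": input_hash,
            "output_sha256": fingerprint(output),
            "input_count": input_count,
            "output_count": len(output),
        })
        return output

    def is_consistent(self):
        """True if every step consumed exactly what the previous step produced."""
        return all(
            prev["output_sha256"] == cur["input_sha256"]
            for prev, cur in zip(self.entries, self.entries[1:])
        )


if __name__ == "__main__":
    log = LineageLog()
    data = [{"text": "Hello"}, {"text": ""}, {"text": "World"}]
    data = log.run("drop_empty", lambda rs: [r for r in rs if r["text"]], data)
    data = log.run("lowercase", lambda rs: [{"text": r["text"].lower()} for r in rs], data)
    for entry in log.entries:
        print(entry["step"], entry["input_count"], "->", entry["output_count"])
    print(log.is_consistent())
```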

5. Labor Ethics in Data Annotation

Behind every labeled dataset are human annotators—often working under challenging conditions. Ethical concerns around labor practices in data annotation are gaining increasing attention.

Concerns:

- Low wages and lack of fair compensation

- Exposure to harmful or distressing content

- Lack of recognition and career growth

Ethical Practices:

- Ensure fair wages and safe working environments

- Provide mental health support for annotators

- Offer training and upskilling opportunities

Annotera is committed to ethical labor practices, ensuring that our annotation workforce is treated with dignity, fairness, and respect.

6. Data Quality vs. Scale Trade-Offs

In the race to build larger models, organizations often prioritize data volume over quality. However, this approach can exacerbate ethical issues.

Risks:

- Inclusion of noisy or harmful data

- Amplification of biases

- Reduced model reliability

Balanced Approach:

- Focus on curated, high-quality datasets

- Use iterative validation and cleaning processes

- Combine automated and human review mechanisms

This reinforces the principle that the impact of high-quality training data on LLM performance goes beyond metrics: it shapes the ethical foundation of AI systems.
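A single iteration of the clean-and-validate loop described in this section might combine exact deduplication, a length filter, and an automated flag that routes questionable documents to human reviewers. The `min_words` threshold and the `blocklist` of lowercase single words are placeholder assumptions; real pipelines often add near-duplicate detection and learned quality classifiers on top.

```python
import hashlib
import re


def normalize(text):
    """Lowercase and collapse whitespace so trivial variants hash identically."""
    return re.sub(r"\s+", " ", text.strip().lower())


def clean_pass(docs, min_words=5, blocklist=frozenset()):
    """One iteration of automated cleaning.

    - drops exact duplicates (after normalization),
    - drops documents shorter than `min_words`,
    - routes documents containing a blocklisted word to human review.
    `blocklist` holds lowercase single words; punctuation is not stripped.
    Returns (kept, needs_review).
    """
    seen, kept, needs_review = set(), [], []
    for doc in docs:
        norm = normalize(doc)
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # duplicate of a document already processed
        seen.add(digest)
        words = norm.split()
        if len(words) < min_words:
            continue  # too short to be useful training text
        if any(w in blocklist for w in words):
            needs_review.append(doc)  # automation flags it; a person decides
        else:
            kept.append(doc)
    return kept, needs_review
```

Routing flagged documents to people instead of silently deleting them is what makes this a combined automated and human review mechanism rather than a pure filter.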

7. Challenges in RLHF (Reinforcement Learning From Human Feedback)

RLHF annotation plays a crucial role in aligning LLM outputs with human values. However, this process introduces its own ethical complexities.

Issues:

- Subjectivity in human feedback

- Annotator bias influencing model behavior

- Inconsistent labeling standards

Best Practices:

- Standardize annotation guidelines

- Use diverse annotator pools

- Continuously evaluate feedback quality

Annotera’s RLHF Annotation Services are designed to minimize subjectivity while maximizing alignment with ethical and contextual expectations.
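One common way to continuously evaluate feedback quality is to measure inter-annotator agreement on items that two annotators both labeled. The sketch below computes Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The preference labels in the example are hypothetical; for more than two annotators, measures such as Fleiss' kappa or Krippendorff's alpha are used instead.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: probability both pick the same label independently.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Hypothetical pairwise-preference labels from two annotators.
    a = ["resp_1", "resp_1", "resp_2", "resp_2"]
    b = ["resp_1", "resp_2", "resp_2", "resp_2"]
    print(cohens_kappa(a, b))  # 0.5
```

Persistently low agreement on a task is a signal worth acting on: it often means the annotation guidelines are ambiguous and need to be standardized further.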

8. Cultural Sensitivity and Global Context

LLMs are deployed globally, but training data often reflects a narrow cultural perspective. This can lead to outputs that are inappropriate or offensive in certain contexts.

Ethical Considerations:

- Language and cultural nuances

- Region-specific norms and values

- Localization challenges

Approach:

- Incorporate multilingual and multicultural datasets

- Use region-specific annotation teams

- Continuously evaluate model outputs across geographies

Annotera ensures that its data annotation outsourcing processes account for cultural diversity, enabling globally relevant AI systems.
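Evaluating outputs across geographies can begin by breaking an evaluation set down by region or locale instead of reporting one global score. This sketch assumes a hypothetical list of `(region, passed)` results from human or automated review, and flags regions whose pass rate trails the best-performing region by more than a tolerance; the locale codes and the 0.05 tolerance are illustrative.

```python
from collections import defaultdict


def pass_rates_by_region(results):
    """Pass rate per region from (region, passed) evaluation results."""
    counts = defaultdict(lambda: [0, 0])  # region -> [passed, total]
    for region, passed in results:
        counts[region][0] += int(passed)
        counts[region][1] += 1
    return {region: p / t for region, (p, t) in counts.items()}


def lagging_regions(rates, tolerance=0.05):
    """Regions whose pass rate trails the best region by more than `tolerance`."""
    if not rates:
        return []
    best = max(rates.values())
    return sorted(r for r, rate in rates.items() if best - rate > tolerance)


if __name__ == "__main__":
    results = ([("en-US", True)] * 9 + [("en-US", False)]
               + [("hi-IN", True)] * 7 + [("hi-IN", False)] * 3)
    rates = pass_rates_by_region(results)
    print(rates)                   # {'en-US': 0.9, 'hi-IN': 0.7}
    print(lagging_regions(rates))  # ['hi-IN']
```

Comparing against the best region rather than a fixed bar keeps the check relative, so a gap surfaces even when every region clears some absolute threshold.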

Conclusion: Building Ethical AI Starts With Data

Ethical challenges in LLM training data collection are multifaceted, spanning privacy, bias, labor practices, and transparency. Addressing these challenges requires more than technical solutions—it demands a commitment to responsible AI development.

As a trusted data annotation company, Annotera empowers organizations to build ethical, high-performing LLMs through:

- Rigorous data curation and validation

- Scalable and transparent annotation workflows

- Expert-driven RLHF Annotation Services

Ultimately, ethical data practices are not just about avoiding risks—they are about building AI systems that users can trust. And in a world increasingly shaped by intelligent systems, that trust is invaluable.
