How to Evaluate a Data Annotation Outsourcing Provider: A Practical Checklist

As AI-driven products move from experimentation to real-world deployment, the need for high-quality labeled data has never been more urgent. Models today learn from massive and diverse datasets, and the accuracy of those datasets directly determines the performance of machine learning systems. This has pushed organizations to partner with specialized data annotation outsourcing companies that can scale quickly, deliver consistent quality, and ensure operational efficiency.

However, choosing the right partner is far from simple. The market is crowded with vendors offering a wide range of services, tools, and pricing models. Without a structured evaluation approach, companies risk selecting providers that cause delays, inflate costs, or compromise data accuracy. At Annotera, we have supported leading AI teams across industries, and we understand what separates a reliable annotation partner from an unreliable one.

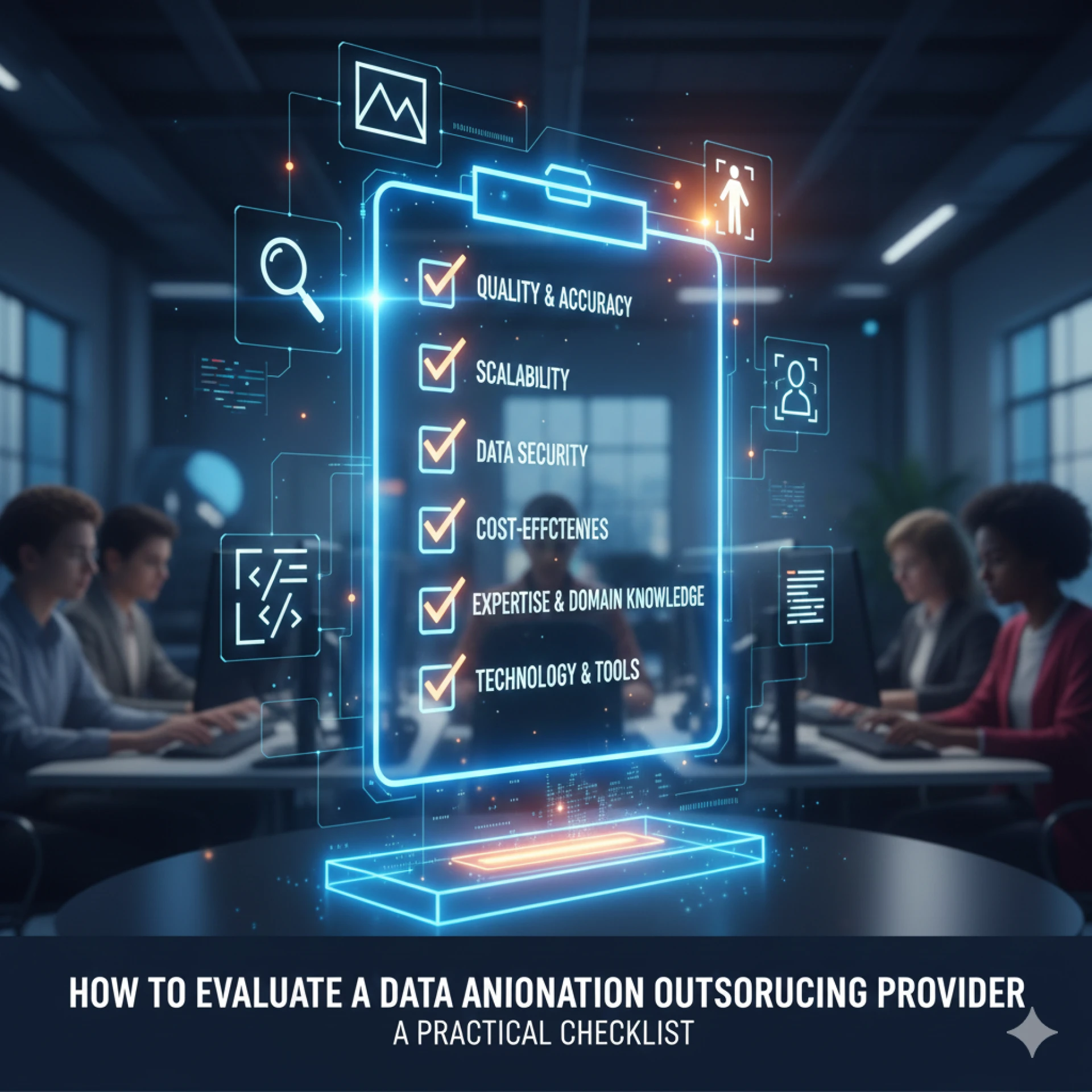

This practical checklist walks you through the key criteria to consider when choosing a data annotation outsourcing provider, so you can make an informed decision.

1. Assess Their Domain Expertise and Training Capabilities

The best annotation providers do more than label data—they understand the context in which the data will be used. Whether you are building autonomous systems, generative AI tools, medical models, or retail automation, domain expertise dramatically impacts labeling accuracy.

Evaluate the provider’s capability by checking:

- Experience with similar datasets and annotation types

- Domain-specific knowledge among annotators

- Ability to customize annotation guidelines

- In-house or external subject-matter experts

- Training programs that ensure annotator proficiency

A strong provider invests in continuous learning cycles so their workforce can adapt to evolving model requirements. At Annotera, our annotators receive structured onboarding, domain training, and periodic calibration to maintain consistent quality across projects.

2. Examine Their Quality Assurance Framework

Errors in annotation can lead to cascading failures—misclassification, bias, hallucinations, and reduced model trust. That’s why analyzing the vendor’s quality management processes is essential.

A high-quality provider should offer:

- Multi-layer QA systems (review, audit, verification)

- Defined accuracy metrics such as precision, recall, F1-score, or IoU

- Real-time quality monitoring dashboards

- Root-cause analysis workflows to correct recurring issues

- Reviewer escalation paths for complex cases

Ask vendors to walk you through their QA pipelines and provide sample reports. A competent partner will be transparent about its quality thresholds and show how it prevents quality drift during large-scale annotation.
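To make the accuracy metrics named above concrete, here is a minimal Python sketch of how you might score a vendor's sample delivery against a small gold set that you labeled yourself. The function names, the label values, and the box format `(x_min, y_min, x_max, y_max)` are illustrative assumptions, not any vendor's reporting format.

```python
from typing import Sequence, Tuple

# Assumed box format: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def precision_recall_f1(
    gold: Sequence[str], vendor: Sequence[str], positive: str
) -> Tuple[float, float, float]:
    """Score vendor labels against trusted gold labels for one class."""
    if len(gold) != len(vendor):
        raise ValueError("gold and vendor must have the same length")
    pairs = list(zip(gold, vendor))
    tp = sum(g == positive and v == positive for g, v in pairs)
    fp = sum(g != positive and v == positive for g, v in pairs)
    fn = sum(g == positive and v != positive for g, v in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned bounding boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0
```

Asking a vendor which of these metrics it reports, and against what reference set, is often more informative than a single headline accuracy number.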

Annotera follows a structured Annotation Quality Framework that includes guideline refinement, double-blind reviews, automated consistency checks, and continuous feedback loops—ensuring every dataset meets enterprise-grade accuracy.
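Double-blind review is usually summarized with an inter-annotator agreement statistic. The sketch below computes Cohen's kappa for two annotators who labeled the same items independently. It is a generic illustration of the statistic, not a description of Annotera's internal tooling, and the function name is hypothetical.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Agreement between two annotators, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    # Share of items on which both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected if each annotator labeled at random
    # according to their own label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)
```

A kappa close to 1 indicates strong agreement, while a value near 0 means the annotators agree no more often than chance would predict, which usually points to ambiguous guidelines.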

3. Evaluate Their Security, Compliance, and Data Governance Standards

Annotation often involves sensitive datasets—user conversations, images, financial details, or proprietary business information. A provider must demonstrate robust security practices and compliance readiness.

Here is what to check:

- ISO/IEC certifications, particularly ISO 27001

- GDPR, HIPAA, SOC 2, or other regulatory adherence

- Data residency and storage protocols

- Access controls, authentication layers, and NDA enforcement

- Secure VPNs or VDI environments for annotators

- Audit logs and breach-response playbooks

Data protection should not be negotiable. Annotera maintains enterprise-grade security infrastructure and strict compliance frameworks to protect our clients' sensitive information from unauthorized access or misuse.

4. Review Their Workforce Model and Scalability

Your annotation needs may grow as your AI models expand. A good outsourcing partner should scale seamlessly without compromising quality or timelines.

Key considerations include:

- Size and stability of the annotator workforce

- Availability of multilingual or domain-specific talent

- Workforce distribution—on-site, remote, hybrid

- Ability to scale up or down based on demand

- Annotator retention and training processes

A provider that relies heavily on temporary or loosely managed workforces may struggle to maintain consistency. Annotera’s distributed yet tightly managed workforce model enables clients to scale from small pilot projects to large, continuous annotation pipelines with ease.

5. Check Technology Stack and Tooling Flexibility

Annotation tools significantly impact efficiency, turnaround speed, and quality. A reliable provider should either supply robust in-house tools or be able to work with your preferred platform.

Evaluate the vendor’s technological capability by assessing:

- Their annotation tool's features (automation, pre-labeling, version control)

- Support for complex annotation types (3D, LiDAR, polygons, sentiment layers, OCR, etc.)

- Ability to integrate with your MLOps pipeline

- API availability for data flows

- Compatibility with cloud environments

- Tool performance for large datasets

Technology should enable, not limit, your workflows. Annotera’s advanced annotation platform supports multi-format datasets, real-time QA, dynamic guideline updates, and automated labeling assistance for faster turnarounds.

6. Analyze Communication, Project Management, and Transparency

Smooth collaboration determines the long-term success of outsourced annotation. Vendors must operate as an extension of your internal team, not as a detached service provider.

Important markers of strong communication include:

- Dedicated project managers

- Clear escalation protocols

- Regular syncs, reports, and performance metrics

- Transparent timelines and progress tracking

- Ability to adapt quickly to guideline changes

A provider should proactively identify potential issues rather than waiting for clients to spot them. Annotera’s project managers maintain consistent alignment with stakeholders and offer detailed visibility into every stage of the annotation lifecycle.

7. Compare Pricing Models and Real Cost Efficiency

Low-cost vendors may appear attractive initially but often lead to poor-quality output, rework expenses, and delays. Instead of evaluating price in isolation, weigh it against the quality the provider delivers.

Factors to review include:

- Pricing transparency

- Cost variations based on annotation types

- Minimum volume requirements

- Hidden fees or tool charges

- Billing flexibility (hourly, per-label, subscription)

A high-quality provider optimizes cost through efficiency, automation, and quality control—not by underpaying annotators. Annotera follows a transparent pricing structure aligned with effort, complexity, and long-term scalability.
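One way to compare quotes on real cost rather than sticker price is to add the expected rework cost to the per-label price. The sketch below assumes a deliberately simple model in which every erroneous label costs you a fixed amount to find and fix. The vendor names, prices, error rates, and rework cost are made-up numbers for illustration only.

```python
def effective_cost_per_label(
    price_per_label: float, error_rate: float, rework_cost_per_error: float
) -> float:
    """Expected spend per delivered label once rework is included."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    return price_per_label + error_rate * rework_cost_per_error


# Hypothetical quotes, for illustration only.
vendors = {
    "low_price_vendor": {"price_per_label": 0.05, "error_rate": 0.10},
    "higher_price_vendor": {"price_per_label": 0.07, "error_rate": 0.02},
}
# Assumed internal cost to detect and correct one bad label.
REWORK_COST_PER_ERROR = 0.40

for name, quote in vendors.items():
    cost = effective_cost_per_label(
        quote["price_per_label"], quote["error_rate"], REWORK_COST_PER_ERROR
    )
    print(f"{name}: {cost:.3f} per label")
```

With these assumed numbers, the cheaper quote works out to about 0.090 per label and the more expensive quote to about 0.078, so the lower sticker price is the costlier option once rework is counted.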

8. Review Case Studies, Client Feedback, and Track Record

Evidence of past performance is one of the strongest indicators of vendor credibility.

Ask about:

- Case studies relevant to your industry

- Testimonials or references

- Project challenges they have solved

- Results delivered for similar datasets

- Client retention rates

Providers who have experience with large-scale, high-accuracy annotation projects are better equipped to meet enterprise demands.

9. Start With a Pilot Project Before Scaling

Even the most impressive vendor presentations cannot replace real-world evaluation. A pilot project allows you to:

- Test quality outputs

- Measure turnaround speeds

- Validate communication responsiveness

- Refine guidelines collaboratively

- Evaluate scalability

During pilots, prioritize detailed feedback loops and observe how quickly the vendor adapts to improvements. At Annotera, every engagement starts with a structured pilot to align expectations and deliver predictable outcomes.
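Pilot samples are small, so the measured accuracy carries uncertainty. As a rough sketch, the Wilson score interval below gives a range for the vendor's true accuracy based on how many audited pilot labels were correct. The function names and the idea of passing a pilot only when the lower bound clears your target are assumptions for illustration, not a standard acceptance rule.

```python
import math
from typing import Tuple


def wilson_interval(correct: int, total: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 is roughly 95% confidence)."""
    if total <= 0 or not 0 <= correct <= total:
        raise ValueError("need total > 0 and 0 <= correct <= total")
    p = correct / total
    denom = 1.0 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    )
    return center - half_width, center + half_width


def pilot_meets_target(correct: int, total: int, target: float) -> bool:
    """Conservative check: pass only if the lower bound reaches the target."""
    lower, _ = wilson_interval(correct, total)
    return lower >= target
```

The same measured accuracy is more convincing on a larger audit: 97 correct out of 100 produces a much wider interval than 485 correct out of 500, which is a reason to agree on the audit sample size before the pilot starts.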

Choosing the right data annotation outsourcing provider is one of the most critical decisions you will make in your AI development journey. The right partner not only delivers accurately labeled data but also enhances your team’s productivity, accelerates model development, and reduces overall operating costs.

By working through the checklist above, covering expertise, quality, security, scalability, tooling, communication, pricing, and track record, and then validating your choice with a pilot, you can confidently identify a provider capable of supporting long-term AI success.
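If you are comparing several vendors, the checklist can be turned into a simple weighted scorecard. The sketch below is one possible way to do that. The criterion names follow the sections of this checklist, but the weights and the 1 to 5 rating scale are hypothetical and should be adjusted to your own priorities.

```python
from typing import Dict

# Hypothetical weights; change them to reflect what matters most to you.
WEIGHTS: Dict[str, float] = {
    "expertise": 0.15,
    "quality": 0.25,
    "security": 0.15,
    "scalability": 0.10,
    "tooling": 0.10,
    "communication": 0.10,
    "pricing": 0.10,
    "track_record": 0.05,
}


def weighted_score(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion ratings (for example 1 to 5) into one score."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    # Dividing by the total keeps the result on the rating scale
    # even if the weights do not sum exactly to 1.
    return sum(weights[c] * scores[c] for c in weights) / total_weight
```

A single number should not replace judgment, but scoring each vendor the same way makes it easier to see where one provider's strengths offset another's weaknesses.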

At Annotera, we combine human intelligence, domain expertise, rigorous quality frameworks, and intelligent automation to help AI teams build reliable, high-performing datasets at scale.

If you’re ready to collaborate with a trusted partner for your next AI project, Annotera is here to support you every step of the way.
